2026 · Preprint

Neuralset: a high-performing Python package for Neuro-AI

Jean-Rémi King, Corentin Bel, Linnea Evanson, Julien Gadonneix, Sophia Houhamdi, Jarod Lévy, Joséphine Raugel, Andrea Santos Revilla, Mingfang Zhang, Julie Bonnaire, Charlotte Caucheteux, Alexandre Défossez, Théo Desbordes, Pablo Diego-Simón, Shubh Khanna, Juliette Millet, Pierre Orhan, Saarang Panchavati, Antoine Ratouchniak, Alexis Thual, Teon L. Brooks, Katelyn Begany, Yohann Benchetrit, Marlène Careil, Hubert Banville, Stéphane d'Ascoli, Simon Dahan, Jérémy Rapin

FAIR · Meta AI · 2026

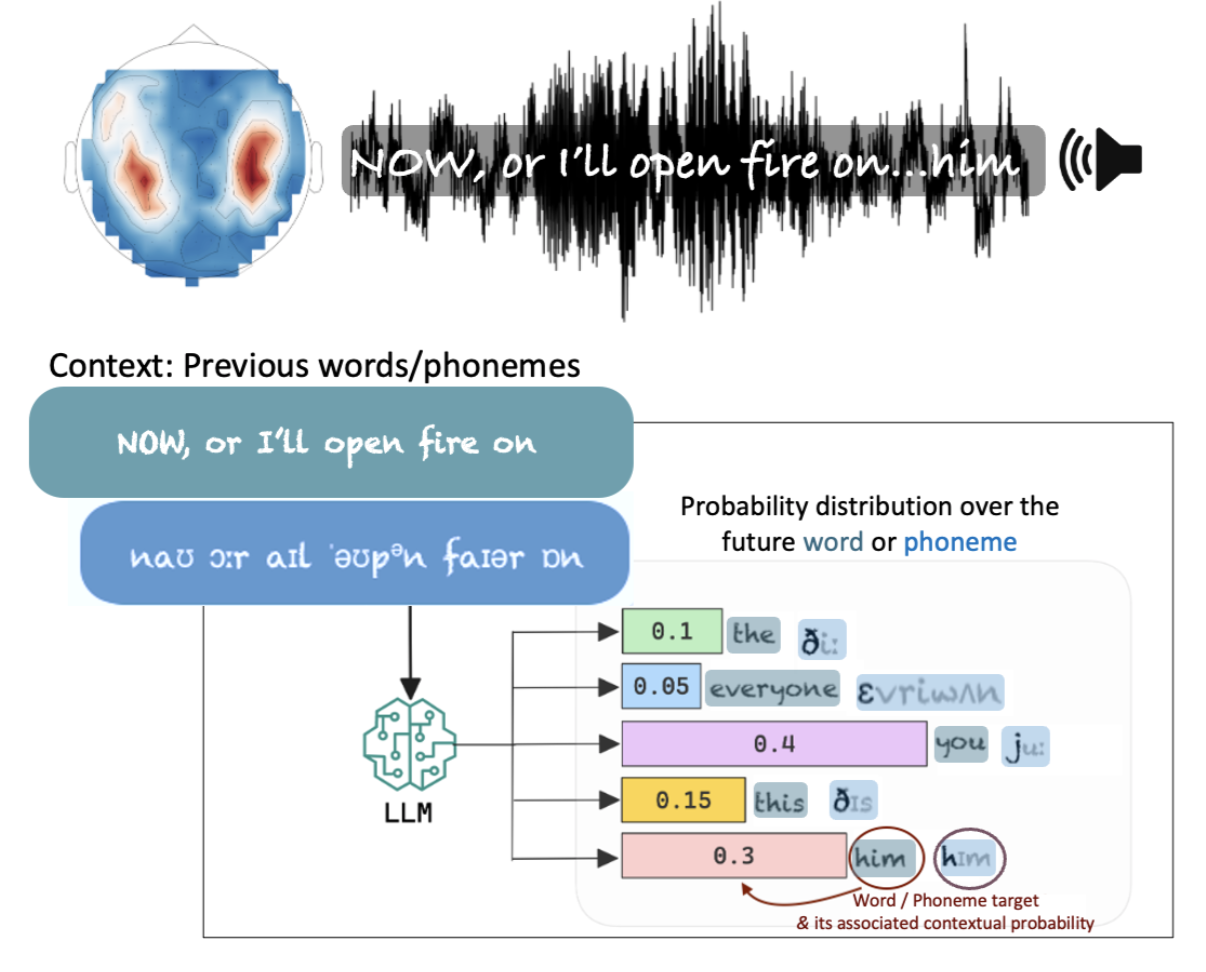

Neuralset is a unified Python package and benchmark for neuro-AI: it standardises datasets, metrics, and baselines so deep-learning models can be directly compared on how well they predict neural activity.
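A common way to score "how well a model predicts neural activity" is a linear encoding model. A ridge regression maps the model's features to recorded neural signals, and predictions on held-out samples are scored with a per-channel Pearson correlation. The sketch below illustrates that idea in plain NumPy, under stated assumptions. It does not use Neuralset's API. The helper names (`ridge_fit`, `pearson_per_channel`, `encoding_score`), the synthetic data, the fixed train/test split, and the choice of ridge plus Pearson r are all hypothetical and may differ from the package's actual metrics and baselines.

```python
import numpy as np


def ridge_fit(X, Y, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y (no intercept)."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)


def pearson_per_channel(Y_true, Y_pred):
    """Pearson correlation between true and predicted signals, one value per channel."""
    yt = Y_true - Y_true.mean(axis=0)
    yp = Y_pred - Y_pred.mean(axis=0)
    num = (yt * yp).sum(axis=0)
    den = np.sqrt((yt ** 2).sum(axis=0) * (yp ** 2).sum(axis=0))
    return num / den


def encoding_score(features, neural, train_frac=0.8, alpha=1.0):
    """Fit on the first `train_frac` of samples; return mean Pearson r on the rest."""
    n_train = int(train_frac * len(features))
    W = ridge_fit(features[:n_train], neural[:n_train], alpha)
    pred = features[n_train:] @ W
    return float(pearson_per_channel(neural[n_train:], pred).mean())


# Hypothetical synthetic data: "neural activity" is a noisy linear function of
# model A's features, while model B's features are unrelated to it.
rng = np.random.default_rng(0)
n_samples, n_dims, n_channels = 1000, 32, 16
feats_a = rng.standard_normal((n_samples, n_dims))
feats_b = rng.standard_normal((n_samples, n_dims))
true_weights = rng.standard_normal((n_dims, n_channels))
neural = feats_a @ true_weights + rng.standard_normal((n_samples, n_channels))

# Scoring both models with the same data split and metric makes them directly comparable.
scores = {
    "model_a": encoding_score(feats_a, neural),
    "model_b": encoding_score(feats_b, neural),
}
print(scores)
```

The key design point is that both feature sets go through an identical pipeline, with the same split, regression, and metric. Any difference in score therefore reflects the features themselves rather than the evaluation setup. This is the kind of comparability a shared benchmark aims to provide.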